In some scenarios/applicatons, where the precision may not be so critically important but the speed (performance) is, you may be willing to sacrifice some extent of accuracy for the speed.

In neutral networks, where the math function  where n is usually small (less than 2, for instance), you can avoid the expensive exp() provided by math.h (for other programming languages, similar inbuilt system functions are provided)

where n is usually small (less than 2, for instance), you can avoid the expensive exp() provided by math.h (for other programming languages, similar inbuilt system functions are provided)

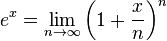

The  (exponential function) can be considered as the following:

(exponential function) can be considered as the following:

In practice, n cannot approach to the infinity but we can achieve a relatively good accuracy by using a large n.

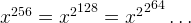

For example, if we put  , then we can multiply

, then we can multiply  by itself 8 times due to the fact

by itself 8 times due to the fact

With this in mind, we can come up with the following approximation:

1 2 3 4 5 6 7 | inline double exp1(double x) { x = 1.0 + x / 256.0; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; return x; } |

inline

double exp1(double x) {

x = 1.0 + x / 256.0;

x *= x; x *= x; x *= x; x *= x;

x *= x; x *= x; x *= x; x *= x;

return x;

}We can also multiply a few more times, to increase the accuracy.

1 2 3 4 5 6 7 8 | inline double exp2(double x) { x = 1.0 + x / 1024; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; x *= x; return x; } |

inline

double exp2(double x) {

x = 1.0 + x / 1024;

x *= x; x *= x; x *= x; x *= x;

x *= x; x *= x; x *= x; x *= x;

x *= x; x *= x;

return x;

}Now, you have the pattern, but for now, we need to test how accurate these approximations are:

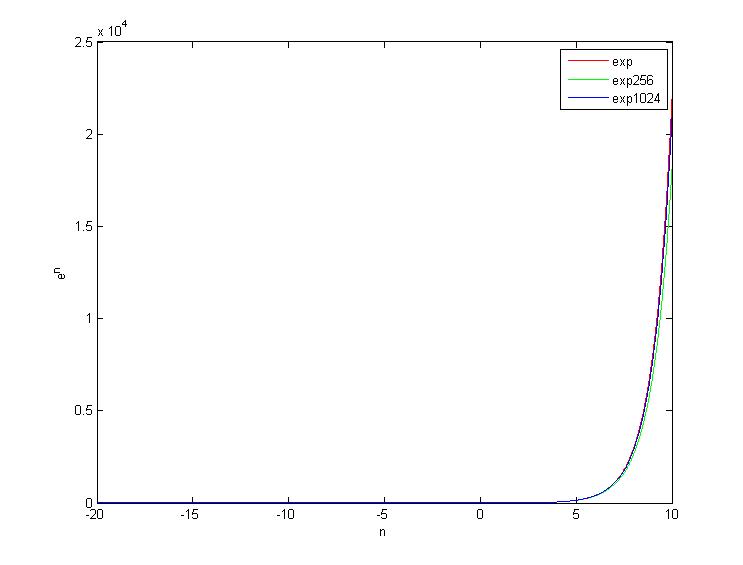

The above plots 3 curves, which are the exp provided by math.h, the exp 256 and the exp 1024. They show very good agreement for input smaller than 5.

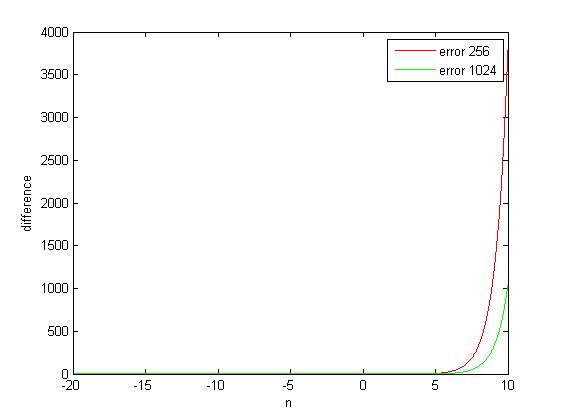

We plot the difference to make it easier to see.

Wow, it really can be a faster alternative if the required input range smaller than 5. For negative inputs, the difference won’t be so noticeable because the value itself is so tiny that can’t be observed visually in graph.

The exp 256 is 360 times faster than the traditional exp and the exp 1024 is 330 times faster than the traditional exp.

–EOF (Coding For Speed) —

loading...

Next Post: Counting the number of Leading Zeros for a 32-bit Integer (Signed or Unsigned)

I did some tests of these functions, however the do not seem to be much faster than the exp function from the library. I tested 70 million calls to each one of the functions and t yielded the next results

library time

time 2.21931

e2 time

time 2.06819

e1 time

time 1.65665

I can see at most a two times increase in performance, how did you tested that it was 300 times faster ?

I think I used codeblocks with gcc compiler…

Benchmark Time(ns) CPU(ns) Iterations

————————————————–

bench_math_exp/1 95 94 7241354

bench_math_exp/10 95 95 7499946

bench_math_exp/15 94 94 7500027

bench_math_exp/20 94 94 7500027

bench_exp256/1 17 17 40384693

bench_exp256/10 17 17 41176471

bench_exp256/15 17 17 39622567

bench_exp256/20 17 17 40384460

bench_exp1024/1 10 10 72413543

bench_exp1024/10 9 9 65624795

bench_exp1024/15 10 10 74999464

bench_exp1024/20 9 9 72413543

exp of 1, 10, 15 and 20, using google benchmark and -O3, it is odd that exp1024 is faster than exp256, but if i use -O0, exp1024 is slower and i don’t know why this is happening.

well, it’s broken the whitespaces .-.

A good idea for an embedded system where a math library is not avaliable. Not practical in a present day PC

Thank you! I was looking for a way to speed up the expensive exp(-x^2) operation in the non-local means denoising algorithm for image processing, where x represents patch similarity. This approximation worked very well. If two patches are similar, then x is small, and the approximation is very accurate. If x is larger than some threshold, then the patches are dissimilar, and so x is large and exp(-x^2) can be approximated as zero directly. (In fact, this latter approximation is necessary as otherwise the contributions from dissimilar patches build up because the approximation is poorer and there are significant artefacts.)

I tested this with n=1024 on an 3715*2527 image with patch 7*7 and search window 21*21 and this reduced the computation time from 307.87 s to 15.0171 s when using a naive NLM calculation, so that’s a 20.5 times speed-up. I didn’t get the 300+ times speed-up you mentioned (I have more going on that just exp calculations), but I’m still pleased with this.

If you have suggestions for an even faster calculation, I am eager to know. (I need to avoid a look-up table as the discretization destorys the data).